4 Steps to Analyze your CATs (part 3)

Are you ready to get even more geeky on test data? :)

In the first two installments of this series, we talked about:

Part 1: Global executive reasoning and timing review

Part 2: Per-question timing review

If you haven't already, start with part 1 of this series and work your way back here.

Let's continue with a deeper dive into the broad strengths and weaknesses revealed by your data.

Run your reports

Note: this article series is based on the metrics that are given in Manhattan Prep tests, but you can extrapolate to other tests that give you similar performance data.

In the Manhattan Prep system, navigate to your practice exam area and click on the link "Generate Assessment Reports." The first time, run the report based solely on the most recent test that you just did; later, we'll aggregate data from your last two or three tests.

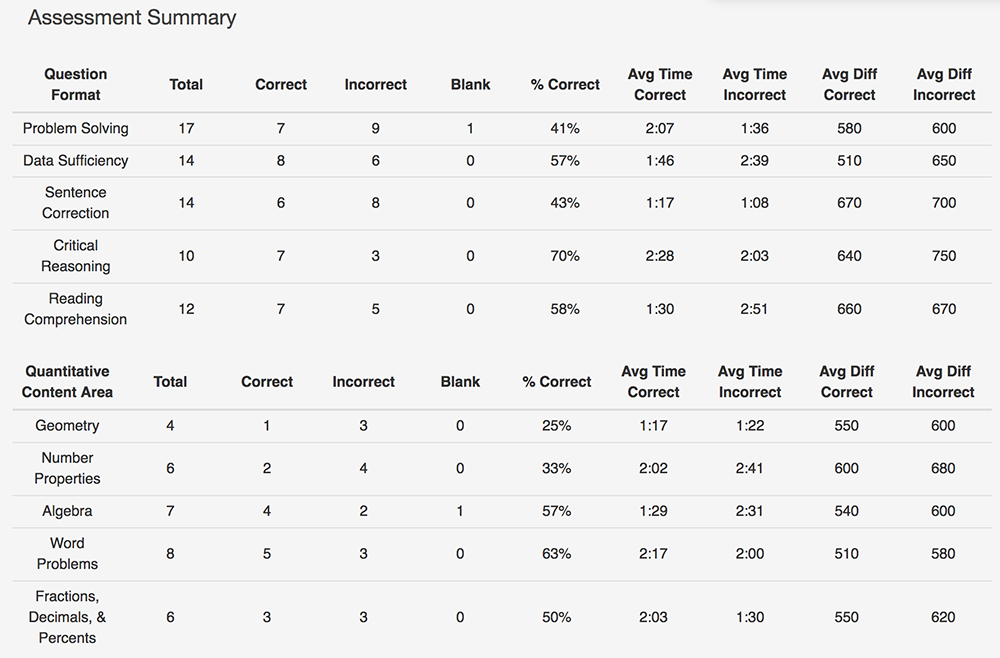

The Assessment Summary

The first report produced is the Assessment Summary; this report provides the percentages correct for the five main Q and V question types, as well as average timing and difficulty levels. It also gives a summary by overall Quant topic area. Here's an example; see whether you can spot any problem areas. (You may need to zoom in. That's a lot of data!)

Most people will immediately say, "Oh, this student is much better at DS than PS." Why? Because the percentage correct is higher for DS.

Actually, that may not be an accurate takeaway for this particular set of results. It's crucial to compare three data points at once: the percentage correct, the time spent, and the difficulty levels.

It's true that the DS percentage correct is significantly higher. But look at that timing: the student is spending a lot longer on incorrect DS as well. (Almost a minute longer, on average, than correct DS!) Where does that extra time come from? Incorrect PS is averaging only about 1.5 minutes, so she's rushing on those.

Finally, check out those difficulty levels; she's actually answering much harder PS problems correctly! What's concerning here is that the average difficulty for correct vs. incorrect PS is almost the same, and that she's rushing on incorrect PS.

I'd tell this student to start looking for careless errors: is that rushing costing her points on PS? And if she is able to answer harder PS correctly, why is the DS difficulty languishing down at a 510 for the correct problems, and why is she spending so much longer on incorrect ones? She may have an issue with the DS process or strategies, or she may be second-guessing herself on DS (or both!). I'd review the overall process and major strategies such as rephrasing and testing cases.

Plus, she needs to learn to cut herself off on some of the harder DS problems (that she's getting wrong anyway!). That average time of 2:39 for incorrect DS needs to come down. She'll need to investigate how to know when she should cut herself off on any particular problem. For example, if she's spent 1 minute but doesn't really understand what the question is asking, that's a great time to cut yourself off. Or if she's spent 2 minutes trying some kind of plan or approach and she's hitting a wall, that's not when you want to keep going and try something else. That's when you cut your losses and move on.
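The two cut-off examples above can be written down as a quick rule of thumb. This is only a minimal sketch: the function name and its inputs are hypothetical, and the 60- and 120-second thresholds are simply the 1-minute and 2-minute marks mentioned above, not hard limits.

```python
def should_cut_off(seconds_spent: int,
                   understands_question: bool,
                   making_progress: bool) -> bool:
    """Return True when the rules of thumb above say to guess and move on.

    All names here are hypothetical; the thresholds mirror the
    1-minute and 2-minute examples in the text.
    """
    # About 1 minute in and still unsure what the question is asking.
    if seconds_spent >= 60 and not understands_question:
        return True
    # About 2 minutes into a plan or approach that has hit a wall.
    if seconds_spent >= 120 and not making_progress:
        return True
    return False


print(should_cut_off(65, understands_question=False, making_progress=False))  # True
print(should_cut_off(130, understands_question=True, making_progress=False))  # True
print(should_cut_off(90, understands_question=True, making_progress=False))   # False
```

In practice the judgment calls (do I understand this? am I making progress?) are the hard part; the sketch just makes the decision points explicit.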

These kinds of results should catch your eye:

- Percentages correct below approximately 50%, especially when coupled with lower average difficulty levels and higher average times. Note that I don't consider PS a straight weakness for the above student, even though the percentage correct is below 50%. She'll need to investigate further, but the other two data points indicate that the real culprit may be rushing and making careless mistakes.

- Average timing that is 30 seconds (or more) higher or lower than the expected average. The above student is edging high on correct CR. Incorrect RC might be high, or it might be that she happened to miss a higher proportion of first questions for a passage (for which the time spent includes the initial reading time).

- A big discrepancy (more than 30 seconds) in average time for correct vs. incorrect questions of the same type; it's normal to spend a little extra time on incorrect questions (because those are probably the harder ones!), but not a ton (that just means you're being stubborn).
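If you keep your numbers in a spreadsheet or script, the three checks above could be sketched roughly as follows. Everything here is an assumption for illustration: the `TypeStats` fields, the `flag_issues` helper, the 120-second expected average, and the sample values (only the 2:39, or 159 seconds, for incorrect DS comes from the example above). A flag is a prompt to investigate, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class TypeStats:
    """Summary numbers for one question type (hypothetical structure)."""
    name: str
    pct_correct: float         # 0-100
    avg_time_correct: float    # seconds
    avg_time_incorrect: float  # seconds
    expected_time: float       # seconds; placeholder, use your test's own target


def flag_issues(s: TypeStats) -> list[str]:
    """Apply the three rules of thumb from the list above."""
    flags = []
    # Rule 1: percentage correct below roughly 50%.
    if s.pct_correct < 50:
        flags.append("percentage correct below 50%")
    # Rule 2: average timing 30+ seconds away from the expected average.
    for label, t in (("correct", s.avg_time_correct),
                     ("incorrect", s.avg_time_incorrect)):
        if abs(t - s.expected_time) >= 30:
            direction = "above" if t > s.expected_time else "below"
            flags.append(f"{label} timing 30s+ {direction} expected")
    # Rule 3: more than 30 seconds between correct and incorrect averages.
    if abs(s.avg_time_correct - s.avg_time_incorrect) > 30:
        flags.append("correct vs. incorrect timing gap over 30s")
    return flags


# Hypothetical numbers, except 159 s (2:39) for incorrect DS.
ds = TypeStats("DS", pct_correct=60, avg_time_correct=105,
               avg_time_incorrect=159, expected_time=120)
for flag in flag_issues(ds):
    print(f"{ds.name}: {flag}")
```

Remember to read the flags together with the difficulty levels, as discussed above; a low percentage correct on its own does not prove a weakness.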

Take a look at the data for the Quant sub-topics listed at the bottom of the report. Notice any issues?

Geometry has a low percentage correct but is also super-fast. Maybe she just hasn't studied geometry yet? (Indeed, I know that is true for this student. :) ) If you haven't studied it yet, then ignore that data; it doesn't matter right now.

Number Properties also has a low percentage correct but quite high time on the incorrect ones. The difficulty level for those is also by far the highest, so it makes sense that she'd have been tempted to spend extra time, but this is another example of where she needs to learn to cut herself off. She lost an average of 41 seconds on 4 problems, or 164 seconds total. That's 2 minutes, 44 seconds: more than one entire problem's worth! She could have used that time elsewhere.
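The lost-time arithmetic is easy to check: 41 seconds times 4 problems is 164 seconds, which works out to 2 minutes, 44 seconds. A tiny sketch (the `format_mmss` helper is a hypothetical name) does the conversion:

```python
def format_mmss(total_seconds: int) -> str:
    """Format a number of seconds as minutes:seconds."""
    minutes, seconds = divmod(total_seconds, 60)
    return f"{minutes}:{seconds:02d}"


extra_per_problem = 41  # average seconds lost per problem, from the example above
problems = 4            # number of problems in the example above
lost = extra_per_problem * problems

print(lost, format_mmss(lost))  # 164 2:44
```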

Question Format & Difficulty

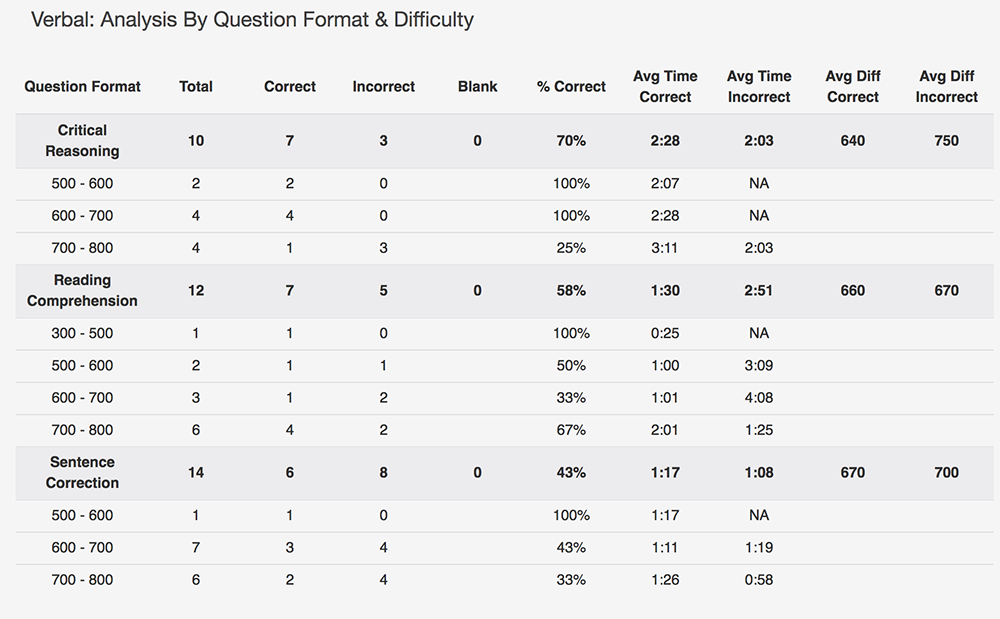

The second and third reports sort the questions by Question Format and Difficulty. Here's an example of the Verbal report:

Spot anything?

The student has a very high percentage correct on CR, though she did have to spend some extra time to get there. Where did that time come from? Incorrect SC and some RC. (The latter is hard to see from the report because it doesn't have a separate category for reading time. But 4 passages and 12 questions should typically add up to around 28 minutes and she spent just under 25 total on RC.)

It can be okay on verbal to have one question type take longer than average if one or both of your others are faster than average, and if that speed is not costing you points. She'll need to analyze to see whether she had any careless mistakes on SC or RC. I'd start that analysis with SC; RC still had a higher percentage correct, but SC was lower.

It would also be a good idea for her to spend some time reviewing the correct CRs to learn how she can streamline her process. If she can still get those problems right in, say, 2m10s on average rather than 2m28s, that's a lot of time to spend elsewhere!
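To put a number on that: going from 2m28s to 2m10s saves 18 seconds per question. The sketch below multiplies that out; the count of 10 questions is purely a hypothetical placeholder (the report's actual count isn't given here), so plug in your own.

```python
def to_seconds(minutes: int, seconds: int) -> int:
    """Convert a minutes/seconds pair to total seconds."""
    return minutes * 60 + seconds


current_avg = to_seconds(2, 28)  # 2m28s, from the example above
target_avg = to_seconds(2, 10)   # 2m10s, from the example above
saved_per_question = current_avg - target_avg


def total_saved(num_questions: int) -> int:
    """Total seconds freed up across num_questions questions."""
    return saved_per_question * num_questions


print(saved_per_question)  # 18
print(total_saved(10))     # 180 (10 questions is a hypothetical count)
```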

In the Question Format & Difficulty reports, look for:

- average timing that is 30 seconds (or more) higher or lower than the expected average, and whether that is happening on correct or incorrect questions, or both (and note that you may have to do some deeper investigating on RC, due to reading time)

- lower percentages correct on lower-level questions than on higher-level questions

As before, look at the data points across these categories together. You might be spending too much time on incorrect higher-level questions and not enough time on lower-level questions (that you then get wrong because you're rushing).

This student had a lower percentage correct for RC 600-700 than RC 700-800. The number of questions is small for 600-700, so this may not mean much, but it's something to keep an eye on.
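One way to spot this kind of pattern is to compare the percentage correct in each difficulty band with the next band up. The following is a minimal sketch only: the `find_inversions` helper, the question counts, and the small-sample cutoff of 5 are all hypothetical, not values from the report.

```python
def find_inversions(buckets):
    """Find difficulty bands with a lower percentage correct than the next band up.

    buckets: list of (label, correct, total), ordered from lowest to
    highest difficulty. Only adjacent bands are compared.
    """
    rates = [(label, correct / total, total)
             for label, correct, total in buckets if total > 0]
    inversions = []
    for (lo_label, lo_rate, lo_n), (hi_label, hi_rate, _) in zip(rates, rates[1:]):
        if lo_rate < hi_rate:
            inversions.append((lo_label, hi_label, lo_n))
    return inversions


# Hypothetical RC counts: (band, number correct, number attempted).
rc = [("600-700", 1, 3), ("700-800", 4, 6)]
for lo_label, hi_label, n in find_inversions(rc):
    # Hypothetical cutoff: treat fewer than 5 questions as a small sample.
    note = " (small sample)" if n < 5 else ""
    print(f"{lo_label} is below {hi_label}{note}")
```

The small-sample note matters: with only a few questions in a band, one miss swings the percentage a lot, so treat a single-test inversion as something to watch rather than a conclusion.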

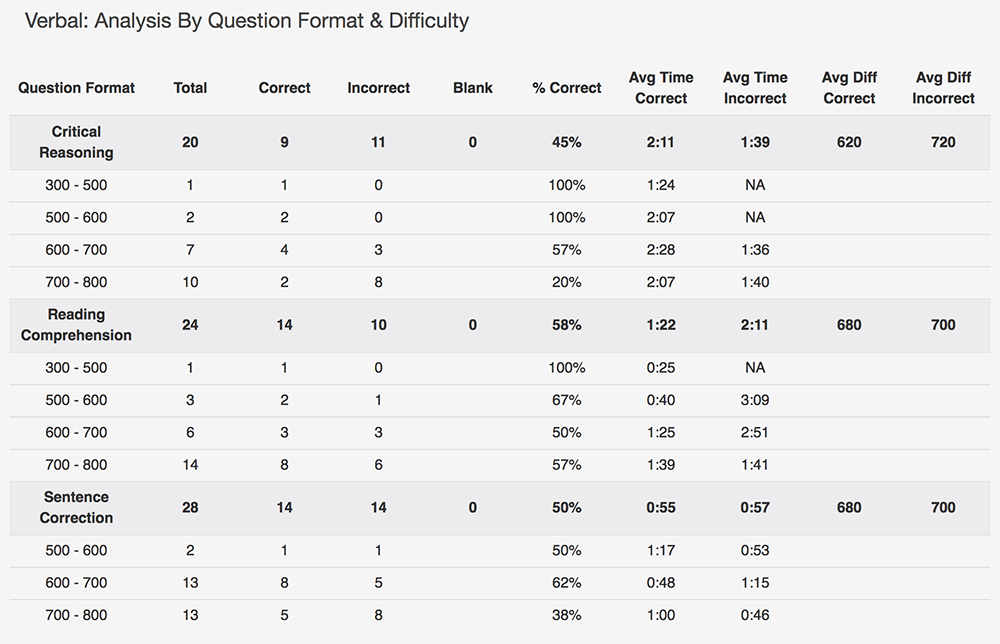

In fact, this is her third practice test, so she can go keep an eye on that right now. Re-run the reports using the last two tests vs. just this one. How does the picture change?

Wow! The RC percentage correct is the same, but CR changed drastically. And SC bumped up a bit. What are the messages here?

First, she significantly improved the percentage correct on CR from test 2 to test 3. That's good in general, although her average timing also increased. It's possible that she really dove in and worked on CR between these two exams, and her efforts paid off in terms of accuracy, but she still has some more work to do as far as efficiency is concerned.

She is still missing 600-700 level RC more than 700-800 level, so she'll likely want to dive into those questions to figure out why. Is she falling into some traps? Are there certain question types that she's weaker at (and she happened to get those more at the 600-700 level on these two tests)?

One big concern is that the percentage correct for SC is trending down, even though the difficulty levels were about the same on both tests. She'll want to examine why when she does her individual question review.

Speaking of that individual question review, join us next time when we'll dive into the final batch of reports: Content Area & Topic data for both Quant and Verbal.
